India is building data centres. The economics of AI is pointing beyond Earth

At the very moment India is inviting the world’s data centres onto its soil, the logic of where computation belongs is beginning to shift — away from the ground altogether.

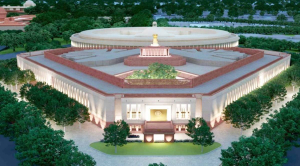

Over the past two years, India has moved decisively to position itself as a global hub for digital infrastructure. States are competing to attract data centres with land allocations, power assurances, and expedited approvals. Industrial groups are committing billions to expand capacity. The argument is straightforward: in an AI-driven economy, hosting compute is a source of long-term economic and strategic advantage.

That argument still holds. But it rests on an assumption that is becoming less certain: that the future of large-scale computation will remain anchored to Earth.

The constraint behind AI infrastructure today is not demand. It is the cost of sustaining that demand within physical systems that were never designed for it.

Electricity consumption is the most visible symptom, but it is not the most important one. According to the International Energy Agency, data centres already consume roughly 415 terawatt-hours annually — about 1.5–2 per cent of global electricity demand — and that share is rising. Yet the real pressure is not global — it is local. AI workloads are clustering in specific regions, pushing grids, cooling systems, and land availability to their limits.
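The two figures quoted above can be cross-checked with a line of arithmetic. The sketch below is only an illustration: it takes the 415 terawatt-hours and the 1.5 to 2 per cent share exactly as stated in the text and works out the global electricity demand they imply. The function and variable names are hypothetical, chosen for this example.

```python
# Figures as quoted in the text (attributed there to the International Energy Agency).
DATA_CENTRE_TWH = 415.0               # annual data-centre consumption, in TWh
SHARE_LOW, SHARE_HIGH = 0.015, 0.02   # quoted share of global electricity demand


def implied_global_demand_twh(data_centre_twh: float, share: float) -> float:
    """Return the global demand (TWh) implied by a data-centre total and its share."""
    if not 0 < share <= 1:
        raise ValueError("share must be in (0, 1]")
    return data_centre_twh / share


# A smaller share implies a larger global total, so the low share gives the upper bound.
upper = implied_global_demand_twh(DATA_CENTRE_TWH, SHARE_LOW)
lower = implied_global_demand_twh(DATA_CENTRE_TWH, SHARE_HIGH)
print(f"Implied global demand: {lower:,.0f} to {upper:,.0f} TWh per year")
```

The point of the check is scale: a share that looks small globally says nothing about how concentrated the load is in any one region, which is the argument that follows.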

What appears as growth at the global level behaves as saturation at the regional level. In major hubs, data centres are no longer just large consumers of electricity; they are becoming dominant ones. In Northern Virginia, now the world’s largest data centre cluster, utilities are already warning of grid strain, while cities like Amsterdam and Singapore have imposed restrictions on new capacity. Governments are beginning to slow approvals — not because capital is unavailable, but because infrastructure cannot keep pace.

AI demand compounds annually. Infrastructure expands in steps. Power grids take years to build. Transmission equipment faces multi-year supply constraints. Cooling depends on water resources that are increasingly scarce. Land near high-capacity transmission corridors is finite and contested.

The result is a simple but underappreciated change: the cost of adding the next unit of compute is no longer determined primarily by chips or capital. It is determined by everything around them.

For a time, this pressure can be managed geographically. Workloads move to regions with cheaper power. Data centres are colocated with energy generation. Hyperscale firms negotiate directly with utilities.

This isn’t a long-term fix. It rearranges the problem within the same limits. Even with advances in efficiency and alternative energy, the rate of AI demand growth continues to outpace the speed at which terrestrial infrastructure can adapt. And when a problem cannot be solved inside a system, the system itself stops being sufficient. This is where the conversation begins to change.

For decades, the idea of putting large-scale computing in space was dismissed. The costs made it impractical, and launch expenses kept computation firmly on Earth. That assumption is now starting to crack.

Launch costs have fallen from over $20,000 per kilogram in the Shuttle era to roughly $2,000 to $3,000 today on reusable systems, with further reductions anticipated. Companies like SpaceX are pushing this curve downward, while firms such as Axiom Space, alongside initiatives within NASA and the European Space Agency, are already exploring the architecture of orbital computing.

This does not make space inexpensive. But it brings it into a range where it can be compared — not to ideal conditions on Earth, but to increasingly constrained ones. And that comparison is where things become interesting. Because space changes the constraint equation in ways that align directly with the pressures now facing AI. Energy in orbit is not tied to terrestrial grids. Solar generation operates with near-continuity, largely unaffected by weather and far less constrained by day-night cycles. For compute systems that require sustained, high-density power, this is not a marginal advantage. It is a structural one.

Cooling, one of the most resource-intensive aspects of data centres, can be handled through radiative heat dissipation into space. On Earth, cooling consumes both energy and water, often becoming a limiting factor in where infrastructure can be deployed.

And perhaps most importantly, space removes the constraint of land. There are no zoning restrictions, no local opposition, no competition for high-capacity transmission corridors.

The constraints do not disappear. They change, from geography to engineering.

This distinction matters because not all computing workloads are equally sensitive to location. The most energy-intensive layers of the AI stack (training large models, running simulations, processing massive datasets) are already geographically flexible. They are not latency-sensitive. They are driven by throughput.

These are also the workloads placing the greatest strain on terrestrial systems.

To be clear, this does not imply that AI is about to “move to space” in any complete sense. Real-time applications, inference systems, and user-facing services will remain Earth-bound. Bandwidth limitations and latency make that unavoidable.

But the transition does not need to be complete to be consequential.

Even a partial migration of the most energy-intensive workloads would alter the economics of AI infrastructure. These workloads account for a disproportionate share of energy consumption. Moving them—even incrementally—would reduce pressure on terrestrial grids and change where future capital is deployed.

And that is where India’s current strategy becomes more complex.

India is expected to add several gigawatts of data centre capacity this decade, backed by tens of billions in committed investment. This expansion is built on the assumption that demand for compute will continue to grow and remain geographically anchored.

But infrastructure investments are not short-term decisions. They are built on expectations that extend over decades. If the structure of demand begins to shift—even gradually—those expectations begin to change.

This is not a collapse scenario. It is a repricing. And repricing is how large economic misallocations emerge: not through sudden failure, but through slow divergence between where capital is deployed and where value ultimately migrates.

There is also a deeper structural implication. On Earth, computing infrastructure is concentrated but still geographically distributed. In space, access depends on a narrower set of capabilities: launch systems, orbital operations, and in-space assembly. These capabilities are not widely distributed. The result is not decentralization. It is consolidation at a higher layer.

The competitive advantage in AI is no longer defined solely by algorithms or data. It is increasingly defined by infrastructure, by who can access environments where computation can scale without the constraints of terrestrial systems.

If that environment begins to extend beyond Earth, then the geography of advantage changes with it.

For India, this creates a strategic tension. The country is right to invest in digital infrastructure. It needs more compute, more capacity, and stronger domestic ecosystems. But if the global frontier of AI infrastructure begins to shift, even partially, toward non-terrestrial environments, then a strategy built entirely on ground-based expansion risks becoming incomplete.

This is not an argument against building data centres. It is an argument against assuming that data centres, as they exist today, will remain the final form of AI infrastructure.

There are real risks in moving infrastructure to space. Orbital congestion is increasing. Debris is a growing concern. Governance frameworks are still evolving. And building systems beyond Earth introduces new technical and geopolitical dependencies.

But technological transitions are rarely stopped by risk alone. They are shaped by changes in relative efficiency. The relevant question is not whether space is ideal. It is whether, at the margin, it becomes more efficient than building the next data centre on Earth.

That moment will not arrive with a headline. It will appear gradually: in cost curves, in investment decisions, and in where the most energy-intensive workloads are actually deployed. And by the time it becomes obvious, the advantage will already have shifted.

India is right to participate in the AI infrastructure race. But participation is not the same as positioning. Because the risk is not that India builds too much. The risk is that it builds for a version of the future that is already beginning to move beyond the ground beneath it.

Author is a theoretical physicist at the University of North Carolina at Chapel Hill, US, and the author of the forthcoming book The Last Equation Before Silence; views are personal